Real-World Applications

From Concept to Deployment

The concepts explored so far — architectural separation, portable execution, sandboxed workloads, and lifecycle control — describe a model for how Edge systems can be designed to evolve over time.

Up to this point, the discussion has been largely conceptual.

These ideas, however, are not theoretical. They have been applied in real-world environments, where systems are required to operate reliably, adapt to changing conditions, and support evolving functionality over extended periods of time.

Moving from concept to deployment introduces a different set of considerations.

Architectural principles must be translated into working systems. Execution models must operate within the constraints of real devices. Lifecycle processes must function across distributed fleets, often with limited connectivity and limited opportunities for direct intervention.

In this context, the value of the model is not defined by its elegance, but by its ability to function under real conditions.

This includes environments where devices are exposed to varying operational contexts, where updates must be applied without disruption, and where system behavior must remain predictable even as functionality evolves.

It also includes scenarios where multiple stakeholders interact with the same system — developers, operators, and integrators — each with different requirements and responsibilities.

The transition from static firmware to dynamic workloads becomes more than a design choice. It becomes an operational requirement. Similarly, the introduction of sandboxed execution and controlled interaction is not only about improving structure.

It is about ensuring that systems remain stable as they change.

Lifecycle management, in turn, becomes the mechanism through which this change is coordinated — governing how updates are introduced, how behavior is monitored, and how control is maintained across devices over time.

Taken together, these elements form the basis of a system that can be deployed, operated, and evolved in practice.

The Role of RIoT Secure

Translating architectural principles into operational systems requires more than design alone. It requires an underlying structure that can support how those systems are deployed, managed, and evolved over time.

The concepts introduced earlier (separation of concerns, controlled execution, and lifecycle management) depend on the presence of such a structure. Without it, they remain difficult to apply consistently across devices, environments, and deployments. RIoT Secure was developed in response to this need.

Its focus has been on enabling Edge systems to operate within a model where execution, communication, and lifecycle processes are coordinated, rather than treated as separate concerns.

This includes the ability to manage workloads over time, maintain controlled interaction between system components, and support long-term operation under real-world conditions.

The development of this approach has been shaped through practical application.

As part of an initiative supported by EIT Digital, RIoT Secure contributed to a multi-year effort aimed at advancing secure IoT infrastructure. This work involved collaboration across multiple stakeholders and provided the context in which early concepts could be extended into production-level systems.

The principles described throughout this handbook were applied in practice.

Architectural separation was introduced at the device level, execution was constrained through controlled environments, and lifecycle processes were established to manage behavior across deployments.

These elements were not considered in isolation, but as part of a system that needed to function reliably over time.

The result of this work was a set of deployments in which these ideas could be evaluated under real conditions. Devices were installed, operated, and maintained across varying environments. Updates were applied as systems evolved. Behavior was monitored and adjusted as requirements changed.

This experience provides the basis for the perspective presented here. The challenges discussed are drawn from real deployments.

The architectural approaches reflect systems that have been implemented and the execution models correspond to environments that have been operated over time.

A Platform Built for Lifecycle at the Edge

The ability to deploy, execute, and evolve workloads over time requires more than isolated mechanisms. It depends on the presence of a platform through which these processes can be coordinated.

In environments where devices operate under constrained conditions and over extended lifetimes, this coordination cannot be assumed. It must be established as part of the system itself, providing a consistent way to manage how functionality is introduced, how behavior is controlled, and how change is applied.

In practice, this coordination has taken the form of a platform designed to support lifecycle management at the edge. Such a platform is not a single component. It is composed of several elements that operate together.

At the device level, mechanisms are required to enforce separation and provide controlled execution environments. This includes the ability to isolate responsibilities, constrain interaction, and support the execution of workloads in a predictable manner.

At the communication level, secure and reliable channels must be established to allow devices to exchange data and receive updates. These channels must operate under varying network conditions, while maintaining integrity and control over what is transmitted and received.
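As a rough illustration of maintaining integrity over what is received, the sketch below rejects any message whose authentication tag does not verify. It assumes a pre-shared key and uses HMAC-SHA256 from the Python standard library; the function names `seal` and `open_sealed` and the message layout are hypothetical and are not taken from RIoT Secure's protocol, which this chapter does not describe.

```python
import hashlib
import hmac
from typing import Optional

TAG_LEN = 32  # length in bytes of an HMAC-SHA256 tag


def seal(key: bytes, payload: bytes) -> bytes:
    """Sender side: append an HMAC-SHA256 tag to the payload."""
    tag = hmac.new(key, payload, hashlib.sha256).digest()
    return payload + tag


def open_sealed(key: bytes, message: bytes) -> Optional[bytes]:
    """Receiver side: return the payload if the tag verifies, else None."""
    if len(message) < TAG_LEN:
        return None
    payload, tag = message[:-TAG_LEN], message[-TAG_LEN:]
    expected = hmac.new(key, payload, hashlib.sha256).digest()
    # compare_digest compares in constant time to avoid timing side channels
    if hmac.compare_digest(tag, expected):
        return payload
    return None
```

A receiver built this way acts only on payloads returned by `open_sealed`, so a corrupted or tampered message is dropped rather than applied.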

At the operational level, processes must exist to manage how systems evolve over time. This includes the delivery of updates, the monitoring of device behavior, and the enforcement of policies that govern how and when changes are applied.
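One way to picture a policy that governs how and when changes are applied is as a small decision function evaluated before each update. The sketch below is illustrative only: the fields `battery_percent` and `busy`, the default threshold, and the function `may_apply` are assumptions made for the example, not part of any platform described here.

```python
from dataclasses import dataclass
from typing import Tuple

Version = Tuple[int, int, int]  # (major, minor, patch)


@dataclass(frozen=True)
class DeviceState:
    firmware_version: Version
    battery_percent: int
    busy: bool  # True while the device is doing time-critical work


@dataclass(frozen=True)
class UpdatePolicy:
    min_battery_percent: int = 30   # hypothetical default
    allow_when_busy: bool = False


def may_apply(update: Version, state: DeviceState,
              policy: UpdatePolicy) -> Tuple[bool, str]:
    """Decide whether an update may be applied now, with a reason."""
    if update <= state.firmware_version:
        return False, "not newer than installed version"
    if state.battery_percent < policy.min_battery_percent:
        return False, "battery too low"
    if state.busy and not policy.allow_when_busy:
        return False, "device busy"
    return True, "ok"
```

Returning a reason alongside the decision makes deferred updates visible to operators, which supports the monitoring side of lifecycle management.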

These elements are interdependent. Execution depends on communication. Lifecycle processes depend on execution. Control depends on all three. In isolation, each of these capabilities can be implemented. In practice, their value emerges when they are integrated.

A platform built for lifecycle at the edge provides this integration.

It establishes a consistent structure through which devices can be deployed, managed, and evolved, while maintaining the constraints required for reliable operation. This does not eliminate the complexity of Edge systems. It provides a way to manage it.

Within this framework, the concepts introduced earlier — workloads, sandboxed execution, and controlled interaction — can be applied in a way that supports real-world deployments.

The following sections examine how these principles have been applied in real deployments, and what they reveal about building and managing Edge systems under real-world conditions.

Industrial AI at the Edge: Comau

Comau is a global provider of industrial automation and robotics solutions, with a long history of delivering systems for automotive manufacturing environments. Its work spans highly controlled production settings, where reliability, predictability, and long-term operation are fundamental requirements.

Within this context, the introduction of artificial intelligence at the edge presents both an opportunity and a challenge.

In collaboration with an internal team at Comau, an initiative was undertaken to explore how machine-learning–based capabilities could be deployed directly on embedded systems operating near robotic equipment.

The focus was on movement analysis, vibration monitoring, and anomaly detection. The models were intended to operate directly alongside robotic systems, processing sensor input in real time to detect deviations in movement patterns, unexpected vibration signatures, or early indicators of mechanical wear.

In this context, the value of the models was not only in their accuracy, but in their ability to operate continuously, close to the source of the data, without introducing latency or reliance on external systems.
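To make the idea of local, continuous anomaly detection concrete, the sketch below flags vibration windows whose RMS level deviates sharply from recent history. This is a simple statistical baseline chosen for illustration. It is not the TensorFlow Lite model used in the deployment, and the class name, window handling, and thresholds are all assumptions.

```python
import math
from collections import deque
from typing import Sequence


def rms(window: Sequence[float]) -> float:
    """Root-mean-square amplitude of one window of samples."""
    return math.sqrt(sum(x * x for x in window) / len(window))


class RmsAnomalyDetector:
    """Flags windows whose RMS is far from the recent baseline (z-score)."""

    def __init__(self, history: int = 20, threshold: float = 3.0,
                 min_history: int = 5) -> None:
        self.history = deque(maxlen=history)
        self.threshold = threshold
        self.min_history = min_history

    def update(self, window: Sequence[float]) -> bool:
        value = rms(window)
        anomalous = False
        if len(self.history) >= self.min_history:
            mean = sum(self.history) / len(self.history)
            var = sum((v - mean) ** 2 for v in self.history) / len(self.history)
            # Floor the deviation so a perfectly steady baseline
            # does not cause a division by zero.
            std = max(math.sqrt(var), 1e-6)
            anomalous = abs(value - mean) / std > self.threshold
        if not anomalous:
            # Learn only from windows considered normal.
            self.history.append(value)
        return anomalous
```

Because the detector keeps only a short history of scalar values, its memory use is bounded, which is the kind of property that matters when the logic runs next to the sensor rather than in a data center.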

The objective was to bring intelligence closer to the machines, allowing decisions to be made locally, without relying on centralized processing. The development of the models themselves was not the limiting factor.

A team of data scientists was already able to design and train models using familiar tools and frameworks. These models could be adapted to run in constrained environments using TensorFlow Lite, and were capable of performing the required analysis.

The challenge, however, lay elsewhere.

It was not in the development of the models, but in bridging the gap between model development and embedded system deployment. Moving from a trained model to a system that could operate reliably on constrained hardware, under real-time conditions, introduced a different set of requirements.

The target systems needed to maintain predictable behavior, support safe updates, and operate reliably over extended periods of time. At the same time, the engineers responsible for the models were not embedded systems specialists, firmware developers, or security engineers.

Requiring them to manage communication, updates, or cryptographic mechanisms would have introduced additional complexity and risk. What was needed was a way to allow AI development to proceed independently, without coupling it to the underlying concerns of device management and lifecycle control.

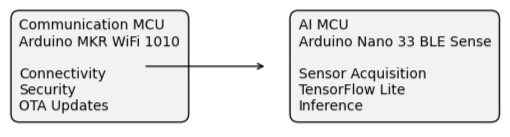

The approach taken was to introduce a clear separation of responsibilities at the hardware level.

The application layer, responsible for sensor acquisition and model inference, was deployed on a dedicated microcontroller. This environment was fully controlled by the application developers, allowing them to use their existing tools, workflows, and libraries without interference from other system concerns.

In this deployment, the application was implemented on an Arduino Nano 33 BLE Sense, which is built around the Nordic nRF52840 SoC and provides a compact platform for sensor acquisition and TensorFlow Lite inference.

In practice, this meant that the microcontroller running the AI workload was entirely dedicated to sensor processing and inference, with no competing responsibilities related to communication, security, or system management.

In parallel, communication, security, and lifecycle management were handled by a separate execution context: an Arduino MKR WiFi 1010 module. This layer was responsible for device provisioning, secure communication, update delivery, and operational control, and it operated independently of the application microcontroller.

Figure 16: Hardware Separation for Edge AI Deployment

The interaction between these layers was deliberately constrained.

The application could focus on processing data and executing models. The supporting infrastructure managed how the system communicated, how it evolved, and how it remained secure over time.
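A deliberately constrained interaction between two execution contexts can be pictured as a narrow message interface: a short allow-list of message types, a bounded payload, and a checksum. The sketch below models such a frame format on the host side. The message types, size limit, and frame layout are hypothetical and do not describe the actual link between the two microcontrollers.

```python
import struct
import zlib
from enum import IntEnum
from typing import Optional, Tuple


class MsgType(IntEnum):
    """Allow-list of message types (hypothetical)."""
    HEARTBEAT = 1
    INFERENCE_RESULT = 2


MAX_PAYLOAD = 64                 # upper bound on payload size in bytes
HEADER = struct.Struct("<BB")    # message type, payload length
TRAILER = struct.Struct("<I")    # CRC-32 over header + payload


def encode(msg_type: MsgType, payload: bytes) -> bytes:
    if len(payload) > MAX_PAYLOAD:
        raise ValueError("payload too large")
    body = HEADER.pack(int(msg_type), len(payload)) + payload
    return body + TRAILER.pack(zlib.crc32(body))


def decode(frame: bytes) -> Optional[Tuple[MsgType, bytes]]:
    """Return (type, payload) for a valid frame, otherwise None."""
    if len(frame) < HEADER.size + TRAILER.size:
        return None
    body, trailer = frame[:-TRAILER.size], frame[-TRAILER.size:]
    if TRAILER.unpack(trailer)[0] != zlib.crc32(body):
        return None  # corrupted in transit
    raw_type, length = HEADER.unpack(body[:HEADER.size])
    payload = body[HEADER.size:]
    if length != len(payload) or length > MAX_PAYLOAD:
        return None
    try:
        return MsgType(raw_type), payload
    except ValueError:
        return None  # message type is not on the allow-list
```

Anything that does not fit the frame format is rejected at the boundary, so neither side needs to trust or understand the internals of the other.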

This separation allowed the two domains to evolve independently.

Model development and iteration could proceed without being impacted by connectivity or lifecycle concerns. At the same time, updates and operational control could be applied without affecting the real-time behavior of the application.

In practice, this enabled a workflow in which models could be developed and tested in isolation, and then deployed into a production environment without requiring significant changes to the application itself.

This approach reflects a broader principle.

AI systems at the edge are most effective when the responsibilities of application logic and system management are not combined.

When these concerns are separated, each can be addressed using the appropriate tools, expertise, and processes.

The result is not only a more manageable system, but one that can support continuous evolution without compromising stability. It also allows AI development to follow its natural cycle of experimentation, refinement, and iteration, without being constrained by the underlying complexity of embedded systems or lifecycle management.

| Area | Description |
|---|---|
| Deployment Context | Industrial robotics and manufacturing environments |
| Primary Objective | Real-time movement analysis, vibration monitoring, and anomaly detection |
| AI Workload | TensorFlow Lite inference operating on embedded sensor data |
| Hardware Architecture | Dual-microcontroller model with separated execution domains |
| Application Platform | Arduino Nano 33 BLE Sense (nRF52840) |
| Lifecycle & Connectivity | Arduino MKR WiFi 1010 handling communication, security, and lifecycle operations |
| RIoT Secure Components | µTLS, FUSION, OASIS |
| Operational Challenge Addressed | Separating AI development workflows from communication, security, and lifecycle management |
| Key Outcome | Enabled independent evolution of AI workloads while maintaining stable operational infrastructure |

While the Comau deployment highlights the challenges of enabling AI development on constrained devices, the following example illustrates how similar principles apply in a fully operational, safety-critical environment.

Edge AI in Operation: SAS Ground Service Handling

Scandinavian Airlines (SAS), in collaboration with GreatSway Enterprises, operated within a highly regulated and safety-critical environment at Stockholm Arlanda Airport. Ground operations involve a wide range of vehicles and systems that must function reliably under varying conditions, often with limited tolerance for failure.

Within this environment, even seemingly straightforward operational requirements can become complex in practice.

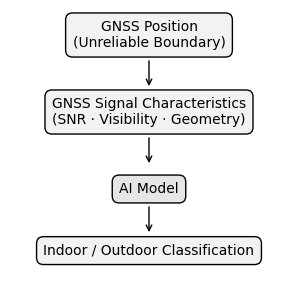

One such requirement is the automatic enforcement of speed limitations when vehicles enter indoor areas, such as baggage handling zones. Traditionally, this type of control relies on GNSS-based geofencing, where vehicle position is used to determine when speed restrictions should be applied.

At the latitude of Stockholm Arlanda Airport, this approach proved unreliable.

GNSS accuracy is affected by satellite geometry, signal obstruction, and environmental conditions. In practice, positional errors of several meters were common, particularly near buildings and transitions between indoor and outdoor areas. This made it difficult to determine, with sufficient confidence, whether a vehicle had entered a restricted zone.

A system based purely on position therefore could not reliably distinguish between being just outside a building and already inside it. For a control system that depends on precise transitions, this ambiguity was unacceptable.
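The ambiguity can be shown with a small worked example. Assume the system knows its signed distance to the building boundary (positive outside, negative inside) and an error radius for the position fix. The function name and the numbers used below are illustrative assumptions; the source states only that errors of several meters were common.

```python
def zone_from_position(distance_to_boundary_m: float,
                       error_radius_m: float) -> str:
    """Classify a position fix relative to a boundary.

    distance_to_boundary_m is signed: positive means outside,
    negative means inside. If the boundary lies within the error
    radius, the fix cannot decide which side the vehicle is on.
    """
    if distance_to_boundary_m > error_radius_m:
        return "outside"
    if distance_to_boundary_m < -error_radius_m:
        return "inside"
    return "ambiguous"
```

With an assumed error radius of 5 m, a vehicle estimated to be 2 m outside the doorway yields "ambiguous", which is exactly the region where the speed restriction has to switch on.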

The consequence was not simply reduced precision.

It introduced operational risk, which led to the exploration of alternative approaches.

Cloud-based solutions were not viable, due to limited connectivity in indoor environments and the requirement for immediate, deterministic responses. Introducing additional infrastructure, such as beacons, would have added complexity to an already busy operational setting.

The challenge, therefore, was not one of positioning alone.

It was one of classification — determining whether a vehicle was operating indoors or outdoors, under constrained conditions and with strict reliability requirements. This problem was addressed through the introduction of an AI-based approach, developed in collaboration with Neuton.ai.

Rather than attempting to improve positional accuracy, the solution focused on interpreting the characteristics of the GNSS signal itself. By analyzing data such as satellite visibility, signal strength, and signal geometry, it became possible to infer environmental context — distinguishing between indoor and outdoor operation based on how the signal behaved.
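The general shape of this approach, deriving features from signal quality rather than from position, can be sketched as follows. The features, thresholds, and voting rule here are invented for illustration and stand in for the trained model developed with Neuton.ai, whose inputs and internals are not described in this chapter.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass(frozen=True)
class Satellite:
    cn0_dbhz: float      # carrier-to-noise density (signal strength)
    used_in_fix: bool    # whether the receiver used it for the position fix


def extract_features(sats: List[Satellite], hdop: float) -> Dict[str, float]:
    """Summarize satellite visibility, signal strength, and geometry."""
    used = [s for s in sats if s.used_in_fix]
    return {
        "n_used": float(len(used)),
        "mean_cn0": mean(s.cn0_dbhz for s in used) if used else 0.0,
        "hdop": hdop,  # horizontal dilution of precision (geometry)
    }


def classify(features: Dict[str, float]) -> str:
    """Majority vote over hand-picked thresholds (hypothetical values)."""
    votes = [
        features["n_used"] < 5,       # few satellites visible
        features["mean_cn0"] < 30.0,  # weak signals
        features["hdop"] > 3.0,       # poor geometry
    ]
    return "indoor" if sum(votes) >= 2 else "outdoor"
```

A trained classifier replaces the hand-picked thresholds with boundaries learned from recorded data, but the inputs remain cheap to compute from what a GNSS receiver already reports.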

The resulting model was implemented as a compact classification library, designed to operate efficiently on constrained hardware. It was integrated directly into the existing application running on an ATmega2560 microcontroller, where it could perform real-time inference as part of the system’s normal operation.

This allowed classification decisions to be made immediately, without reliance on external systems, ensuring that safety-related actions could be triggered deterministically and without delay.

Figure 17: Signal-Based Classification (GNSS) Using AI Model

This integration was performed incrementally, with the AI functionality introduced alongside existing services, including safety controls, operational data collection, and driver identification mechanisms. This allowed the system to evolve without disrupting established behavior or requiring a redesign of the overall architecture.

In parallel, communication, security, and lifecycle management were handled independently. These responsibilities were managed through a separate execution context, ensuring that updates, connectivity, and device governance could evolve without interfering with application behavior.

This separation allowed the AI functionality to be developed and deployed without introducing additional operational risk.

Within the broader system, RIoT Secure operated as a component of a larger solution, contributing secure communication, device management, and lifecycle control, while hardware and edge compute capabilities were provided through collaboration with GreatSway Enterprises.

This arrangement reflects a practical deployment model.

Different components of the system are provided by different stakeholders, each focusing on their area of expertise, while maintaining clear boundaries between application logic and supporting infrastructure. The result was a system capable of making real-time, safety-critical decisions directly at the edge.

It operated without reliance on continuous connectivity, avoided the need for additional infrastructure, and remained compatible with the constraints of the existing deployment. More broadly, this use case illustrates how intelligent functionality can be introduced into operational systems without requiring fundamental redesign.

When responsibilities are separated and lifecycle management is treated as infrastructure, new capabilities can be added incrementally, allowing systems to evolve while maintaining stability over time. This makes it possible to deploy AI in environments where devices are constrained, physically distributed, and expected to operate reliably over extended lifetimes.

| Area | Description |
|---|---|
| Deployment Context | Airport ground operations within a safety-critical environment |
| Primary Objective | Indoor/outdoor vehicle classification for automated speed control |
| AI Workload | Real-time GNSS signal classification using embedded AI inference |
| Hardware Architecture | Embedded microcontroller deployment with separated lifecycle and communication control |
| Application Platform | ATmega2560-based embedded system |
| Lifecycle & Connectivity | Independent communication and lifecycle management execution context |
| RIoT Secure Components | µTLS, OASIS, FUSION |
| Operational Challenge Addressed | Reliable real-time classification under constrained connectivity and operational conditions |
| Key Outcome | Enabled deterministic edge decision-making without reliance on cloud infrastructure |

What These Deployments Demonstrate

The two deployments described above take place in different environments and address different types of problems.

One is situated within an industrial robotics context, where the focus is on enabling AI development to operate within constrained embedded systems. The other operates in a safety-critical airport environment, where reliability, determinism, and long-term operation are essential.

Despite these differences, the underlying challenges share a common structure.

In both cases, the development of AI models was not the primary obstacle. The ability to train, refine, and adapt models already existed within the respective teams. What proved more difficult was integrating these models into systems that were expected to operate reliably over time, under real-world constraints, and without introducing additional operational risk.

This distinction is important.

It suggests that the challenge of Edge AI extends beyond computation or model design. It is closely tied to how systems are structured, how responsibilities are distributed, and how change is managed once a system has been deployed.

In each deployment, a clear separation between application logic and supporting infrastructure played a central role. The components responsible for communication, security, and lifecycle management were isolated from the logic that defined application behavior. This made it possible for each part of the system to evolve independently, without requiring coordinated changes across unrelated concerns.

This separation also influenced how new functionality could be introduced.

Rather than requiring a complete redesign, AI capabilities were added alongside existing services. The systems were extended, rather than replaced. This allowed new behavior to be incorporated incrementally, reducing both technical complexity and operational disruption.

At the same time, controlled execution ensured that these additions did not compromise system stability. By constraining how logic interacts with the broader system, it became possible to introduce new capabilities while maintaining predictable behavior. This was particularly relevant in environments where real-time operation and reliability could not be compromised.

Lifecycle management formed the mechanism through which these changes were coordinated.

Updates could be delivered and applied without direct intervention. Device behavior could be observed over time. Policies governing how systems evolve could be enforced consistently across deployments. This provided a structured way to manage systems that were expected to operate over long periods, often in environments where physical access was limited.

These elements — separation, controlled execution, and lifecycle management — did not operate independently.

They reinforced one another. Separation made it possible to isolate responsibilities. Controlled execution ensured that interactions remained predictable. Lifecycle management provided a means to coordinate change over time. Taken together, they enabled systems to evolve in a controlled manner, without compromising stability or increasing risk.

The significance of these observations extends beyond the specific deployments described here. They point toward a broader requirement for Edge systems that are expected to support dynamic functionality, long lifetimes, and operation under real-world constraints. In such environments, the ability to introduce change safely becomes as important as the functionality itself.

Transitioning Forward

The deployments described illustrate how the concepts introduced earlier can be applied in practice. They show how systems can be structured to support evolving functionality, how workloads can be introduced without disrupting existing behavior, and how devices can be managed over time under real-world conditions.

At the same time, they reflect a particular stage in a broader transition.

The ability to deploy AI at the edge, to introduce new functionality incrementally, and to manage systems over extended lifetimes is no longer limited to isolated experiments. These capabilities are beginning to appear within production environments, where they must coexist with established processes, operational constraints, and long-term reliability requirements.

This creates a shift in perspective.

The question is no longer whether such systems can be built. It is how they can be adopted more broadly, and how organizations can transition from existing approaches toward models that support continuous evolution. This transition is not purely technical.

It involves changes in how systems are designed, how responsibilities are distributed, and how teams interact with the devices they deploy. It also requires a reconsideration of how lifecycle management is treated — not as a supporting function, but as an integral part of the system.

The examples presented here provide a point of reference.

They demonstrate that it is possible to introduce new capabilities within constrained environments, to operate systems reliably over time, and to evolve functionality without compromising stability.

What remains is to consider how these approaches can be applied more widely. This includes understanding how such systems can be integrated into existing infrastructures, how they can be scaled across larger deployments, and how the underlying principles can be adapted to different domains and requirements.

The next stage builds on this foundation, moving from individual deployments toward broader patterns of adoption, and examining how these models can be applied across a wider range of use cases and environments.