Business Impact

From Technical Debt to Operational Risk

In the early stages of development, trade-offs are inevitable.

Systems are built to validate ideas, demonstrate functionality, and move quickly toward deployment. Decisions are made with speed in mind — simplifying architecture, combining responsibilities, and postponing concerns that are not immediately critical.

At this stage, these choices are often reasonable. They allow teams to make progress. They reduce initial complexity. They help bring a system to life. But these decisions do not remain isolated.

As systems move from prototype to deployment, and from deployment to operation, the impact of these early trade-offs begins to surface. What was once a shortcut becomes a dependency. What was once acceptable becomes a limitation.

Over time, these limitations accumulate.

In Edge environments, this accumulation has a different character. Devices are deployed into the field, often in large numbers and in locations where direct access is limited. Once operational, they are expected to perform reliably over extended periods of time, under changing conditions and evolving requirements. In this context, unresolved technical debt does not remain a technical concern.

It becomes an operational risk.

A tightly coupled system that is difficult to update becomes a system that is difficult to maintain. A device that cannot be easily monitored becomes a device whose failures persist longer than they should. A system that lacks clear boundaries becomes harder to secure and harder to control.

These are not abstract issues. They affect uptime. They affect service quality. They affect the ability to respond to change. As deployments scale, the impact becomes more pronounced. What may be manageable across a small number of devices becomes increasingly difficult across hundreds or thousands.

Manual intervention becomes impractical. Inconsistencies begin to emerge. The system behaves differently across environments, making it harder to diagnose and resolve issues. Operational overhead increases — not because the system is doing more, but because it is harder to manage.

At this point, the distinction between technical and operational concerns begins to disappear. The system is no longer judged solely by what it can do, but by how reliably it can continue to do it.

This is where technical debt changes form. It is no longer measured in code complexity or development effort. It is measured in downtime, maintenance cost, and the inability to adapt.

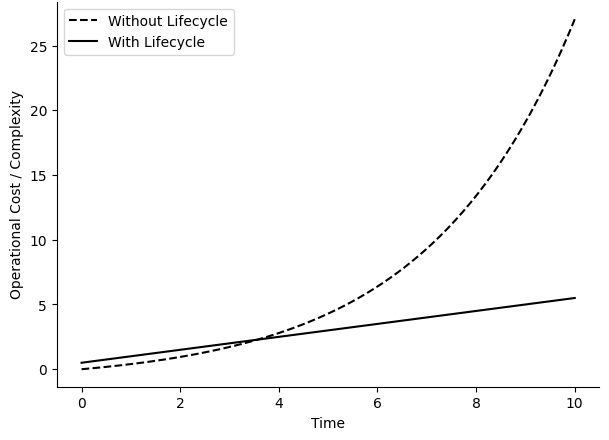

Figure 12: Operational Cost Over Time

At that point, the debt becomes significantly harder to address, because it no longer sits in development; it sits in operation.

The Cost of Inability to Update

All systems change over time.

Requirements evolve, vulnerabilities are discovered, and AI models are refined as new data becomes available. What is deployed today is rarely what needs to run tomorrow. In most environments, this is expected. Systems are updated, improved, and adjusted as part of normal operation.

At the edge, this expectation remains — but the reality is often different. Updating distributed devices is not trivial. Connectivity may be intermittent. Devices may not be reachable at the same time, or at all. Systems may be in unknown or inconsistent states. Each update introduces uncertainty — not only in delivery, but in outcome.

As a result, updates are often delayed, simplified, or avoided.

This is where the cost begins to emerge. A system that cannot be reliably updated becomes fixed at the moment of deployment. Any improvements made after that point — whether functional, performance-related, or security-critical — remain out of reach.

The gap between what the system does and what it needs to do continues to grow.

For AI-driven systems, this gap is particularly significant.

Models are not static. They depend on data, context, and continuous refinement. Without the ability to deploy updated models, systems cannot adapt to changing conditions. Performance degrades, accuracy declines, and the value of the system diminishes. What was once effective becomes outdated.

The same applies to security.

Vulnerabilities are not theoretical — they are discovered regularly. Without a reliable mechanism to deploy patches, these vulnerabilities persist in the field. The longer they remain unresolved, the greater the exposure. In this context, risk is not introduced by new threats. It is sustained by the inability to respond to them.

Operationally, the consequences extend further.

When updates cannot be applied remotely, alternatives must be considered. Manual intervention becomes necessary. Devices may need to be serviced, replaced, or physically accessed. This introduces cost — not only in terms of resources, but in time and disruption.

At scale, these costs compound quickly. There is also a loss of flexibility. Systems that cannot be updated cannot evolve. New features cannot be introduced. Behavior cannot be adjusted. The system becomes constrained by its initial design, regardless of how requirements change. This leads to a simple outcome.

A system that cannot be updated cannot be maintained — and as a result cannot be relied upon over time.
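One common way to reduce the outcome uncertainty described above is to keep a known-good version on the device and to commit a new version only after it passes a health check. The following is a minimal sketch of that idea, not a definitive implementation. The `Device` class, the `apply_update` function, and the `health_check` callback are hypothetical names introduced for illustration; a real device would switch boot slots or containers rather than assign a string.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Device:
    """Hypothetical device that tracks the running and the last known-good version."""
    active_version: str
    previous_version: Optional[str] = None


def apply_update(device: Device, new_version: str,
                 health_check: Callable[[Device], bool]) -> bool:
    """Switch to new_version and keep it only if the health check passes.

    Returns True when the update is committed, False when it is rolled back.
    """
    # Remember the known-good version so the device can always return to it.
    device.previous_version = device.active_version
    device.active_version = new_version

    if health_check(device):
        return True  # outcome verified: keep the new version

    # Outcome not verified: restore the known-good version.
    device.active_version = device.previous_version
    device.previous_version = None
    return False
```

With this structure a failed update leaves the device where it started, so an interrupted or faulty rollout does not have to be repaired by hand.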

Security as a Business Constraint

Security is often discussed as a technical requirement. It is addressed through encryption, authentication, and protective measures designed to reduce risk. These mechanisms are essential, and in many cases, they are implemented with care and precision. But security does not operate in isolation.

It influences what systems are allowed to do — and where they are allowed to operate.

In Edge environments, this influence becomes more visible. Devices are deployed in public or semi-public spaces. They interact with physical processes, sensitive data, and operational systems.

The consequences of compromise extend beyond data loss — they can affect service continuity, safety, and trust. As a result, security becomes a condition for deployment.

This has practical implications.

Systems that cannot be secured to an acceptable level are not deployed. Systems that cannot demonstrate resilience are restricted in where they can operate. Systems that introduce uncertainty are avoided altogether.

In these cases, the limitation is not technical capability.

It is the ability to meet security expectations.

Architecture plays a central role in this. In tightly coupled systems, where multiple concerns share the same environment, exposure is broader. A vulnerability in one component may provide access to others.

The boundaries between functions are unclear, making it harder to contain issues or enforce control. In this context, securing the system becomes more complex — not because individual components are inherently insecure, but because their interactions are difficult to isolate.

This complexity affects decision-making.

Additional safeguards are introduced. Reviews take longer. Deployment timelines extend. In some cases, projects are delayed or reconsidered entirely. Security does not stop progress, but it shapes its pace and direction.

At scale, this influence becomes more pronounced.

Organizations must consider not only whether a system works, but whether it can be trusted to operate reliably over time. This includes the ability to respond to vulnerabilities, enforce boundaries, and maintain control across distributed environments.

Without these assurances, adoption becomes more difficult. This leads to a broader realization. Security is not simply a feature that can be added to a system. It is a property that emerges from how the system is designed.

When architecture reduces exposure and defines clear boundaries, security becomes more manageable. When responsibilities are isolated and interactions are controlled, risk can be contained more effectively.

In these cases, security supports deployment rather than limiting it.

The Hidden Cost of Complexity

Complexity in Edge systems rarely appears as a single, identifiable problem. Instead, it develops gradually, as systems evolve to meet new requirements and adapt to changing conditions.

What begins as a relatively straightforward design becomes layered over time, as additional functionality, integrations, and constraints are introduced. Individually, these additions are often reasonable.

A new feature addresses a specific need. An integration enables broader functionality. A modification improves performance or behavior in a particular scenario. Each step is justified in isolation, and the system continues to function.

The challenge emerges in how these elements interact.

As systems grow, complexity is no longer defined by the number of components they contain, but by the relationships between those components.

Dependencies become more numerous, assumptions become less explicit, and behavior becomes harder to predict. Changes that once had localized impact begin to influence other parts of the system in subtle and sometimes unexpected ways.

In tightly coupled environments, this effect is amplified.

When multiple concerns share the same execution context, the boundaries between them become less distinct.

A change intended to address one requirement may introduce side effects elsewhere, not because the components are flawed, but because their interactions are difficult to fully anticipate.

Over time, this changes how systems are maintained.

Introducing new functionality requires more extensive validation, as the potential impact of each change becomes harder to isolate.

Coordination between teams increases, as different areas of responsibility intersect more frequently. Decisions are made more cautiously, not out of preference, but out of necessity.

This has a direct impact on how quickly systems can evolve.

Effort shifts away from building new capabilities and toward understanding and managing existing complexity. Development cycles extend, and progress becomes more dependent on deep familiarity with the system’s behavior rather than clear structural boundaries.

At scale, this creates a form of friction that is not always immediately visible.

Systems continue to operate, but the effort required to maintain and extend them increases steadily. Innovation is still possible, but it requires more time, more coordination, and more care.

This is the hidden cost of complexity — measured not only in system performance or resource consumption, but in the effort required to sustain progress over time.

Complexity is not defined by what a system contains, but by how its parts interact.
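A simple count illustrates why interactions, rather than components, dominate. As a rough upper bound, assuming any two components in a shared execution context may affect each other, a system with n components has the following number of possible pairwise interactions:

```latex
I(n) = \binom{n}{2} = \frac{n(n-1)}{2},
\qquad I(5) = 10, \quad I(10) = 45, \quad I(20) = 190
```

Doubling the number of components roughly quadruples the number of relationships that may need to be understood and validated. Clear boundaries reduce this figure by limiting which components are allowed to interact at all.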

From Deployment to Lifecycle Operations

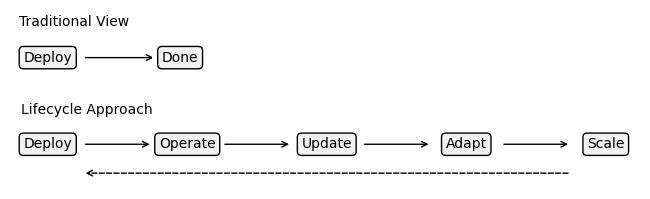

For many systems, deployment is treated as the primary milestone.

It marks the point at which a system moves from development into the real world, where it begins to deliver value. Significant effort is invested in reaching this stage — designing the system, implementing functionality, validating performance, and preparing it for release.

Once deployed, the system is often considered complete.

In Edge environments, this assumption does not hold in the same way. Deployment is not an endpoint, but a transition. It is the moment at which responsibility shifts from building the system to operating it over time. From that point forward, the system is no longer defined solely by what it was designed to do, but by how well it can continue to perform under changing conditions.

These conditions are rarely static.

Operational environments evolve, requirements change, and new information becomes available. AI models require refinement as data patterns shift. Security vulnerabilities emerge and must be addressed. Performance expectations adjust as systems scale.

In this context, a system that remains unchanged does not remain stable. It becomes increasingly misaligned with its environment. This changes the nature of system ownership. Responsibility extends beyond initial functionality to include ongoing reliability, adaptability, and control. The system must be monitored, maintained, and updated in a way that ensures continuity without disruption.

The focus moves from delivery to durability.

This shift introduces new requirements. Systems must support secure and reliable updates. They must provide sufficient visibility into their behavior. They must allow changes to be introduced without compromising stability.

These capabilities are not secondary — they are essential to sustaining operation as systems evolve. Without them, the burden shifts elsewhere.

Manual intervention becomes necessary. Devices must be accessed, serviced, or replaced to introduce changes or resolve issues. As deployments grow, this approach becomes increasingly impractical, both in terms of cost and operational effort.

At scale, the distinction becomes clear: deployment is a moment, while operation is a continuous process.

Systems that are designed only for deployment can function initially, but they struggle to adapt. Systems that are designed for lifecycle operation are able to evolve, respond to change, and maintain alignment with their environment.

Figure 13: Traditional vs Lifecycle Approach

This is where the perspective changes. Success is no longer defined by whether a system can be deployed, but by whether it can be sustained.
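The requirement that changes be introduced without compromising stability is often met with a staged rollout: a small group of devices is updated first, and the rollout continues only while failures stay below a threshold. The sketch below illustrates this under stated assumptions. The function `staged_rollout`, the `update_device` callback, and the default `max_failure_rate` of 0.1 are hypothetical and chosen only for illustration.

```python
from typing import Callable, List, Sequence


def staged_rollout(waves: Sequence[Sequence[str]],
                   update_device: Callable[[str], bool],
                   max_failure_rate: float = 0.1) -> List[str]:
    """Update devices wave by wave and stop when a wave fails too often.

    Returns the IDs of the devices that were updated successfully.
    """
    updated: List[str] = []
    for wave in waves:
        if not wave:
            continue  # nothing to do for an empty wave
        failures = 0
        for device_id in wave:
            if update_device(device_id):
                updated.append(device_id)
            else:
                failures += 1
        # Halt before the next wave if this one looks unhealthy.
        if failures / len(wave) > max_failure_rate:
            break
    return updated
```

For example, `staged_rollout([["a"], ["b", "c", "d"], ["e", "f"]], update)` stops after the second wave if `update` fails for `"c"`, so devices `"e"` and `"f"` are never exposed to the faulty change.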

Enabling Scalable Edge AI

The challenges associated with Edge systems — maintaining control, managing updates, ensuring security, and handling complexity — are often treated as separate concerns. Each is addressed individually, through targeted solutions that aim to reduce friction in specific areas.

In practice, however, these challenges are closely connected.

The ability to update a system depends on how it is structured. The ability to secure it depends on how responsibilities are separated. The ability to scale it depends on how complexity is managed over time.

Each of these factors influences the others, and together they determine how a system behaves beyond its initial deployment.

This interconnectedness becomes more apparent as systems grow.

A small deployment may tolerate limitations in one area without immediate consequence. As the number of devices increases, and as their role within the system expands, these limitations become more difficult to manage.

What was once an isolated issue becomes a systemic constraint. At this stage, scaling is no longer defined by the number of devices that can be deployed. It is defined by the ability to operate them reliably as that number grows.

For Edge AI systems, this distinction is particularly important. The value of these systems is not only in their ability to process data locally, but in their ability to adapt.

Models must be refined, behavior must be adjusted, and performance must be maintained as conditions change.

Without the ability to support this ongoing evolution, the effectiveness of the system diminishes, regardless of how well it performed initially.

This is where architecture plays a defining role.

When systems are designed with clear separation, controlled interaction, and lifecycle management in mind, they are better positioned to support continuous operation.

Updates can be introduced without disrupting functionality. Security can be maintained without limiting deployment.

Complexity can be managed without slowing progress.

These capabilities do not operate independently — they reinforce each other.

The result is a system that can evolve in place. One that remains aligned with its environment, even as that environment changes. One that can be extended, improved, and maintained without requiring fundamental redesign.

This is what enables scalable Edge AI — not simply the ability to deploy models at the edge, but the ability to manage them as part of a system that continues to operate, adapt, and improve over time.

The value of Edge AI lies not in what it does once, but in how it evolves across the lifecycle.